AI Distillation Attacks: How Some Companies Steal AI Brains

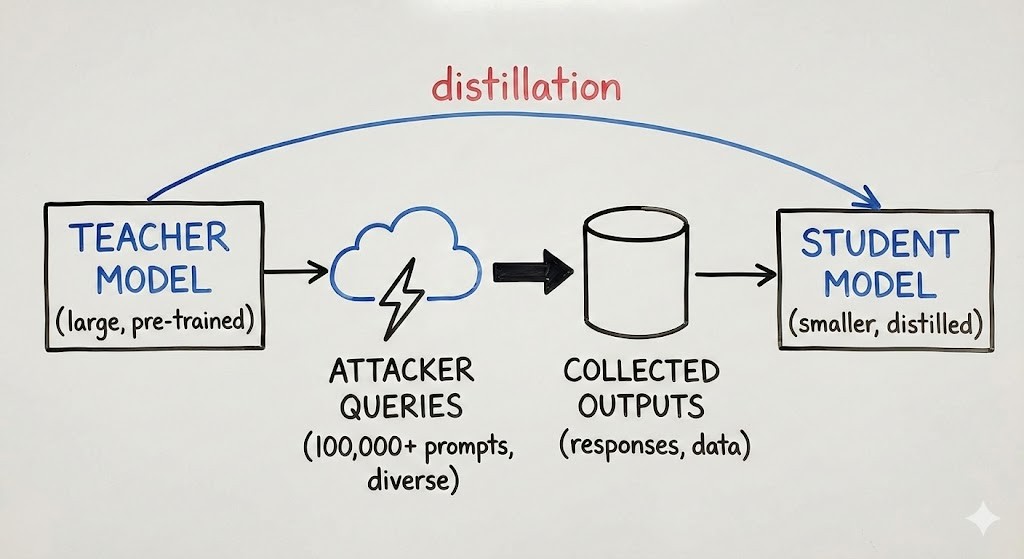

There is a new way to steal technology. It is called an AI distillation attack. Some companies use a smart AI to teach their own weaker AI. They ask the smart AI thousands of questions and record the answers. This helps them copy much of the smart AI’s “brain” for a tiny fraction of what it cost to build. It can save them years of hard work and millions of dollars.

How Does an AI Distillation Attack Work?

Think of it like a student copying a teacher. The student asks the teacher to explain every step of a math problem and writes down every word. Soon, the student can do the math just like the teacher. In the same way, in an AI distillation attack, a rival AI is trained on the answers of a better AI. It learns how to think by imitating the better model.

The Secret of “Showing Your Work”

When an AI explains its reasoning step by step, it gives away how it solves problems. This makes AI distillation attacks much easier to carry out, because the copycat learns the method and not just the final answer.

Anthropic Finds Big Groups Stealing Knowledge

Recently, Anthropic found that some big labs were doing this. These labs were not just curious. They were running large automated systems to drain Claude’s smarts, and they used what they collected to build their own models. In effect, they were taking the hard work Anthropic had already done.

Why This Is Bad for Safety

You might think copying is okay. But there is a big risk. When a model is copied through an AI distillation attack, the safety rules often do not carry over. As a result, the new AI might be very smart but also very dangerous. It might not have the “safety brakes” that the original AI had.

How Do We Spot These Attacks?

At first glance, these attacks are hard to see. They look like normal questions. Still, Anthropic looks for “digital fingerprints,” meaning patterns that do not look human. If one account asks 10,000 hard questions in a row, it is likely part of an AI distillation attack.

Watching for Weird Patterns

Just as a bank watches for stolen cards, AI companies watch for “hungry” accounts. These accounts try to pull out far more data than a normal user would.
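The idea of watching for “hungry” accounts can be sketched in a few lines of Python. This is only an illustration, not how Anthropic or any other company actually does it. The class name `VolumeMonitor`, the one-hour window, and the account IDs are made up for the example. Only the 10,000-question figure comes from the text above.

```python
from collections import defaultdict, deque


class VolumeMonitor:
    """Flags accounts that send too many requests inside a sliding time window.

    Hypothetical sketch: the threshold and window are assumptions, and a real
    system would look at many more signals than raw request counts.
    """

    def __init__(self, max_requests=10_000, window_seconds=3600.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # One queue of request timestamps per account.
        self._timestamps = defaultdict(deque)

    def record(self, account_id, timestamp):
        """Record one request and return True if the account now looks suspicious."""
        queue = self._timestamps[account_id]
        queue.append(timestamp)
        # Forget requests that are older than the window.
        while queue and timestamp - queue[0] > self.window_seconds:
            queue.popleft()
        return len(queue) >= self.max_requests


if __name__ == "__main__":
    monitor = VolumeMonitor(max_requests=3, window_seconds=10.0)
    for t in (0.0, 1.0, 2.0):
        print("flagged:", monitor.record("account-a", t))
```

A count like this is only a first filter. A busy but honest customer could also trip it, so a flagged account would still need a closer look.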

Stopping the Thieves: Slowing Them Down

One way to stop AI distillation attacks is to slow them down. If a user asks too many deep questions, the AI can slow down or stop answering for a while. This makes stealing the “brain” too slow and too expensive. This helps keep the technology safe.
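One common way to slow down a greedy user is a “token bucket.” The sketch below is a generic example of that technique and is not taken from any real AI service. The class name `TokenBucket`, the numbers, and the idea that a “deep” question costs more tokens are all assumptions made for illustration.

```python
class TokenBucket:
    """A simple rate limiter: each request spends tokens, and tokens refill over time.

    Hypothetical sketch: capacity, refill rate, and per-question cost are
    illustrative values, not settings used by any real provider.
    """

    def __init__(self, capacity, refill_per_second):
        self.capacity = float(capacity)
        self.refill_per_second = float(refill_per_second)
        self.tokens = float(capacity)  # Start with a full bucket.
        self.last_seen = None

    def allow(self, now, cost=1.0):
        """Return True if a request costing `cost` tokens may go through at time `now`."""
        if self.last_seen is not None:
            elapsed = max(0.0, now - self.last_seen)
            # Refill, but never above the bucket's capacity.
            self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_seen = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


if __name__ == "__main__":
    bucket = TokenBucket(capacity=2, refill_per_second=1.0)
    # A "deep" question could be charged a higher cost than a simple one.
    print(bucket.allow(now=0.0, cost=2.0))  # Uses the whole bucket.
    print(bucket.allow(now=0.5))            # Too soon, not enough tokens yet.
```

A normal user rarely empties the bucket, so they notice nothing. Someone firing off thousands of questions runs dry quickly and has to wait.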

Mixing Up the Answers to Protect Data

Another trick to stop AI distillation attacks is to change the answers a little bit. The answer is still correct, but the explanation is slightly messy. While this does not bother a person, it makes it very hard for another AI to learn from it. It is like a teacher using messy handwriting to stop a cheater from copying.
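Here is a toy sketch of the “messy handwriting” idea: the wording of the explanation changes a little each time, while the final answer is never touched. The article does not say how real systems change their answers, so everything here is an assumption, including the function name `vary_explanation` and the list of filler phrases. A real defense would be far more subtle.

```python
import random

# Hypothetical filler phrases, used only for this illustration.
OPENERS = ["", "Next, ", "Then, ", "After that, ", "From here, "]


def vary_explanation(steps, final_answer, rng):
    """Vary the surface wording of an explanation but keep the final answer as-is."""
    varied = []
    for step in steps:
        opener = rng.choice(OPENERS)
        # Lowercase the first letter only when an opener is placed in front of it.
        text = step[0].lower() + step[1:] if opener and step else step
        varied.append(opener + text)
    return " ".join(varied) + " Answer: " + final_answer


if __name__ == "__main__":
    steps = ["Add 2 and 3 to get 5.", "Multiply 5 by 4."]
    rng = random.Random()  # Unseeded, so the wording can differ between runs.
    print(vary_explanation(steps, "20", rng))
```

The person reading still gets every step and the right answer. A copycat model collecting thousands of these replies gets a less consistent pattern to learn from.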

AI Theft Is Now a Global Problem

This is not just a fight between companies. It is also a fight between countries. Because AI is very powerful, stopping AI distillation attacks is now a matter of national security. No country wants its best tools copied by a rival.

The Need for Better Laws

Right now, the rules are not clear. Anthropic wants leaders to make new laws. These laws should treat AI distillation attacks like stealing a secret. If it is too easy to copy an AI, companies will have less reason to spend the time and money to build new ones.

What Happens Next?

In conclusion, the fight against AI distillation attacks is just starting. As thieves get smarter, the “good guys” must build better walls. Ultimately, this battle will decide who builds the smartest and safest AI in the world.